At the Symposium on Cloud Computing (SoCC) 2015, Kirill Bogdanov presented our work on performance debugging of replica selection algorithms in geo-distributed storage systems. We found bugs in widely used systems such as Cassandra and MongoDB.

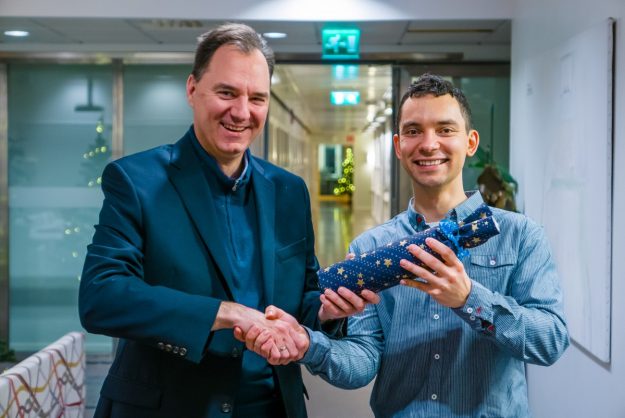

Kirill also entered the Student Research Competition at SIGCOMM 2015 and described the work in this three-minute video:

[db-video id=”04o6v4e3″]

The full abstract is as follows:

Modern distributed systems are geo-distributed for reasons of increased performance, reliability, and survivability. At the heart of many such systems, e.g., the widely used Cassandra and MongoDB data stores, is an algorithm for choosing a closest set of replicas to service a client request. Dynamically changing network conditions pose a significant problem, with suboptimal replica choices resulting in reduced performance due to increasing response latency. In this paper we present GeoPerf, a tool that tries to automate the process of systematically testing the performance of replica selection algorithms for geo-distributed storage systems. At the core of our approach is a novel technique that combines symbolic execution and lightweight modeling to generate a set of inputs that can expose weaknesses in replica selection. As part of our evaluation, we analyzed network round trip times between geographically distributed regions of the Amazon EC2 cloud, and computed how often the order of nearest replicas changed per day from any given region’s perspective. We tested Cassandra and MongoDB using our tool, and found bugs in each of these systems. Finally, we use our collected Amazon EC2 latency traces to study the behavior of these buggy replica selection algorithms under realistic circumstances. We find that significant time was lost due to programming errors. For example, due to the bug in Cassandra, the median wasted time for 10% of all requests is above 50 ms.
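To make the "order of nearest replicas" measurement in the abstract more concrete, here is a minimal sketch of how such a count could be computed from a series of round-trip-time samples taken from one region's perspective. It is an illustration only, not GeoPerf's code or the paper's exact methodology: the function names `replica_order` and `count_order_changes`, the region labels, and the latency values are all hypothetical.

```python
from typing import Dict, List, Optional, Tuple


def replica_order(rtts: Dict[str, float]) -> Tuple[str, ...]:
    """Return replica names sorted from lowest to highest RTT.

    Ties are broken by name so the ordering is deterministic.
    """
    return tuple(sorted(rtts, key=lambda region: (rtts[region], region)))


def count_order_changes(samples: List[Dict[str, float]]) -> int:
    """Count how often the nearest-replica ordering differs between
    consecutive RTT samples (all measured from the same vantage point)."""
    changes = 0
    previous: Optional[Tuple[str, ...]] = None
    for sample in samples:
        current = replica_order(sample)
        if previous is not None and current != previous:
            changes += 1
        previous = current
    return changes


# Hypothetical RTT samples (milliseconds) from one region to three replicas.
samples = [
    {"us-east": 80.0, "eu-west": 95.0, "ap-south": 210.0},
    {"us-east": 82.0, "eu-west": 94.0, "ap-south": 205.0},
    {"us-east": 101.0, "eu-west": 93.0, "ap-south": 207.0},  # eu-west becomes nearest
    {"us-east": 79.0, "eu-west": 96.0, "ap-south": 204.0},   # us-east is nearest again
]

print(count_order_changes(samples))  # prints 2
```

Each reordering is a moment at which a replica selection algorithm would ideally switch its choice; one that reacts late, or not at all, keeps sending requests to a replica that is no longer the closest.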