Foundations of Data Science

As the world becomes increasingly digital, large amounts of data are collected across a wide range of human activities, engineering applications, and natural sciences. Data science has emerged as a key discipline to enable transforming the available data into knowledge products that bring insights into the corresponding domains, improve decision making, and accelerate scientific discovery.

At KTH we study the foundations of data science, with emphasis on developing the underlying theory, designing novel computational methods, and exploring applications in software engineering, language technology, natural sciences, and social-network analysis, among others.

Knowledge discovery

We develop novel methods to extract knowledge from data, model large-scale complex systems, and explore new application areas in data science. Areas of interest include but are not limited to combinatorial and statistical methods for knowledge discovery, efficient methods for big data management, optimization for machine learning, analysis of information and social networks, fairness, accountability and transparency in learning systems, and structured knowledge discovery in life sciences such as digital pathology.

Language technology

We work on questions that involve the analysis and processing of human language at realistic scale and realistic ranges of variation. Usage of human use exhibits obvious and observable regularities and we want to design representations of language to be able to handle how language usage changes over time, between users, topics, channels, and situations. We are interested in building models that are both practical for the application at task and have explanatory power.

Machine learning in software engineering

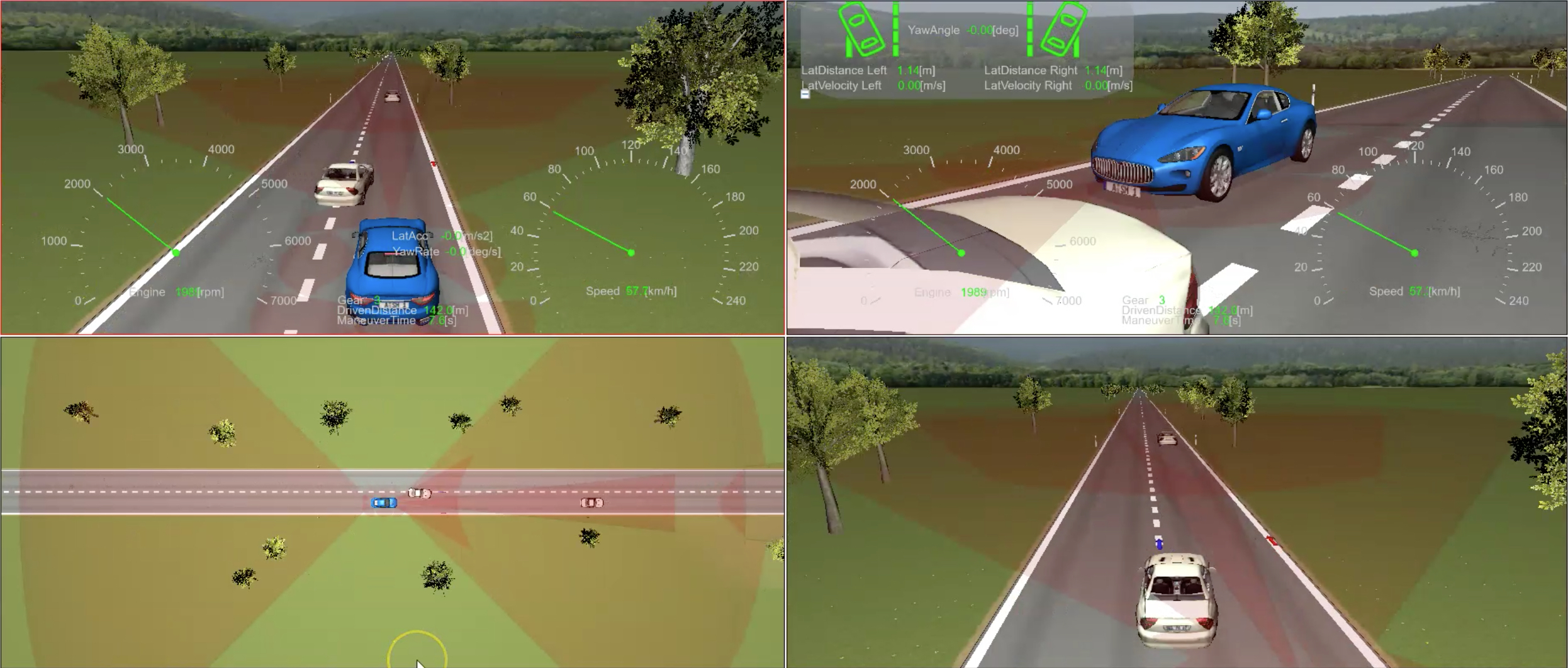

We are developing a general mathematical theory and efficient machine-learning algorithms for learning-based testing of reactive systems. We are applying active machine learning to reverse-engineer dynamic models of multi-vehicle use-cases from software-in-the-loop (SIL) and hardware-in-the-loop (HIL) vehicle simulations. We are also applying machine learning to reverse engineer software requirements and models from code. Serving machine learning models in real time is investigated through the development of new programming systems for building and running distributed and streaming applications.

Massive graph processing

We develop algorithmic and complexity-theoretic techniques to process modern graph data that are sheer in volume, come from a variety of sources, and evolve at a high velocity. Areas of interest include but are not limited to fast static, dynamic, distributed, streaming, and learning-based graph algorithms.

Privacy-preserving data analysis

The recent confluence of digitalization, increasingly data-heavy technologies, advances in machine learning, and legal regulations has turned privacy into a great challenge. Analysis performed on data that (potentially needlessly) allows even unintended inferences on personal data is leading to privacy violations, which in turn prevents sharing and learning from data. To solve these problems, we need 1) quantitative and legal privacy risk and utility assessments and 2) mechanisms for data transformation and learning that improve the results of these assessments. In particular, we investigate the use of synthetic data to strike a balance between privacy and utility/accuracy.

Participants

|

Model-based testing Concurrent software |

|

| Privacy preserving data anlaysis | |

|

Programming models for big data processing Large-scale distributed programming |

|

|

Knowledge discovery Social network analysis |

|

|

Language technology |

|

|

Language technology |

|

|

Machine learning in software engineering, ML in digital pathology |

|

|

Massive graph processing Social network analysis |