MUSAiC - Music at the Frontiers of Artificial Creativity and Criticism

Artificial intelligence (AI) is an especially disruptive technology, impacting a growing number of domains in ways both beneficial and detrimental. It is even showing surprising impacts in the Arts, provoking questions fundamental to philosophy, law, and engineering, not to mention practices in the Arts themselves. MUSAiC is an interdisciplinary research venture confronting questions and challenges at the frontier of the AI disruption of music.

PROJECT WEBSITE

From October 2020 to September 2025, MUSAiC will analyze, criticize and fundamentally broaden the AI transformation of three interrelated music practices: 1) listening, 2) composition and performance, and 3) analysis and criticism. For each practice, and grounded in two specific music traditions (Irish and Swedish), MUSAiC will document and critically analyze the impacts of AI and the ethical issues surrounding it. MUSAiC will formulate and implement the first music pedagogy for AI, the lack of which continues to result in AI systems that have only a surface knowledge of music. From this pedagogy, MUSAiC will develop new holistic methods for understanding and benchmarking AI systems, and for improving them and their application. It will implement and test novel AI systems that dynamically adapt to specific users as “digital apprentices”, thus bringing human-AI music partnerships to new levels of fruitfulness.

The outcomes of MUSAiC will facilitate applications of AI to music in robust and responsible ways, impacting a wide variety of stakeholders. They will not only prepare music practitioners and audiences of the present (human and artificial) for new ways of listening, working, appraising, and developing the art form, but will also pave the way for analyzing, criticizing and broadening the AI transformation of the other Arts.

Postdoc: to be advertised Spring 2022

PhD 1–3: to be advertised Dec. 2020

Funding: European Research Council Consolidator grant (ERC-2019-COG No. 864189)

Duration: 2020-10-01 - 2025-09-30

Proceedings of the 2020 Joint Conference on AI Music Creativity

Published with ISBN 978-91-519-5560-5, DOI: 10.30746/978-91-519-5560-5

Tribute to Professor Robert Keller video

Matthew Caren. TRoco: A generative algorithm using jazz music theory pdf video

Jean-Francois Charles, Gil Dori and Joseph Norman. Sonic Print: Timbre Classification with Live Training for Musical Applications pdf video

Jeffrey Ens and Philippe Pasquier. Improved Listening Experiment Design for Generative Systems pdf video

DEMO Joaquin Jimenez. Creating a Machine Learning Assistant for the Real-Time Performance of Dub Music pdf video

Rui Guo, Ivor Simpson, Thor Magnusson and Dorien Herremans. Symbolic music generation with tension control pdf video

Zeng Ren. Style Composition With An Evolutionary Algorithm pdf video

Raymond Whorley and Robin Laney. Generating Subjects for Pieces in the Style of Bach’s Two-Part Inventions pdf video

Jacopo de Berardinis, Samuel Barrett, Angelo Cangelosi and Eduardo Coutinho. Modelling long- and short-term structure in symbolic music with attention and recurrence pdf video

WIP Aiko Uemura and Tetsuro Kitahara. Morphing-Based Reharmonization using LSTM-VAE pdf video

DEMO Richard Savery, Lisa Zahray and Gil Weinberg. ProsodyCVAE: A Conditional Convolutional Variational Autoencoder for Real-time Emotional Music Prosody Generation pdf video

Carmine-Emanuele Cella, Luke Dzwonczyk, Alejandro Saldarriaga-Fuertes, Hongfu Liu and Helene-Camille Crayencour. A Study on Neural Models for Target-Based Computer-Assisted Musical Orchestration pdf video

Sofy Yuditskaya, Sophia Sun and Derek Kwan. Karaoke of Dreams: A multi-modal neural-network generated music experience pdf video

Guillaume Alain, Maxime Chevalier-Boisvert, Frederic Osterrath and Remi Piche-Taillefer. DeepDrummer : Generating Drum Loops using Deep Learning and a Human in the Loop pdf video

Yijun Zhou, Yuki Koyama, Masataka Goto and Takeo Igarashi. Generative Melody Composition with Human-in-the-Loop Bayesian Optimization pdf video

WIP Joann Ching, Antonio Ramires and Yi-Hsuan Yang. Instrument Role Classification: Auto-tagging for Loop Based Music pdf video

DEMO Roger Dean. The multi-tuned piano: keyboard music without a tuning system generated manually or by Deep Improviser pdf video

Stefano Kalonaris and Anna Aljanaki. Meet HER: A Language-based Approach to Generative Music Systems Evaluation pdf video

Mio Kusachi, Aiko Uemura and Tetsuro Kitahara. A Piano Ballad Arrangement System pdf video

Mathias Rose Bjare and David Meredith. Sequence Generative Adversarial Networks for Music Generation with Maximum Entropy Reinforcement Learning pdf video

Liam Dallas and Fabio Morreale. Effects of Added Vocals and Human Production to AI-composed Music on Listener’s Appreciation pdf video

WIP Sutirtha Chakraborty, Shyam Kishor, Shubham Nikesh Patil and Joseph Timoney. LeaderSTeM-A LSTM model for dynamic leader identification within musical streams pdf video

Nick Collins, Vit Ruzicka and Mick Grierson. Remixing AIs: mind swaps, hybrainity, and splicing musical models pdf video

Lonce Wyse and Muhammad Huzaifah. Deep learning models for generating audio textures pdf video

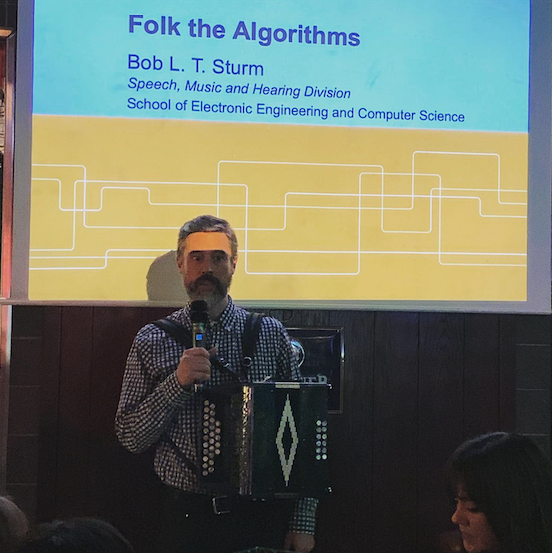

Nicolas Jonason, Bob L. T. Sturm and Carl Thomé. The control-synthesis approach for making expressive and controllable neural music synthesizers pdf video

Mathieu Prang and Philippe Esling. Signal-domain representation of symbolic music for learning embedding spaces pdf video

WIP Foteini Simistira Liwicki, Marcus Liwicki, Pedro Malo Perise, Federico Ghelli Visi and Stefan Ostersjo. Analysing Musical Performance in Videos Using Deep Neural Networks pdf video

Amir Salimi and Abram Hindle. Make Your Own Audience: Virtual Listeners Can Filter Generated Drum Programs pdf video

Grigore Burloiu. Interactive Learning of Microtiming in an Expressive Drum Machine pdf video

Germán Ruiz-Marcos, Alistair Willis and Robin Laney. Automatically calculating tonal tension pdf video

Hadrien Foroughmand and Geoffroy Peeters. Extending Deep Rhythm for Tempo and Genre Estimation Using Complex Convolutions, Multitask Learning and Multi-input Network pdf video

DEMO James Bradbury. Computer-assisted corpus exploration with UMAP and agglomerative clustering pdf video

Shuqi Dai, Huan Zhang and Roger Dannenberg. Automatic Detection of Hierarchical Structure and Influence of Structure on Melody, Harmony and Rhythm in Popular Music pdf video

Brendan O’Connor, Simon Dixon and George Fazekas. An Exploratory Study on Perceptual Spaces of the Singing Voice pdf video

Théis Bazin, Gaëtan Hadjeres, Philippe Esling and Mikhail Malt. Spectrogram Inpainting for Interactive Generation of Instrument Sounds pdf video

WIP Manos Plitsis, Kosmas Kritsis, Maximos Kaliakatsos-Papakostas, Aggelos Pikrakis and Vassilis Katsouros. Towards a Classification and Evaluation of Symbolic Music Encodings for RNN Music Generation pdf video

Sandeep Dasari and Jason Freeman. Directed Evolution in Live Coding Music Performance pdf video

Fred Bruford, Skot McDonald and Mark Sandler. jaki: User-Controllable Generation of Drum Patterns using LSTM Encoder-Decoder and Deep Reinforcement Learning pdf video

Hayato Sumino, Adrien Bitton, Lisa Kawai, Philippe Esling and Tatsuya Harada. Automatic Music Transcription and Instrument Transposition with Differentiable Rendering pdf video

WIP Darrell Conklin and Geert Maessen. Aspects of pattern discovery for Mozarabic chant realization pdf

WIP Samuel Hunt. An Analysis of Repetition in Video Game Music pdf video

WIP Gabriel Vigliensoni, Louis McCallum, Esteban Maestre and Rebecca Fiebrink. Generation and visualization of rhythmic latent spaces pdf video