Matteo Gamba

About me

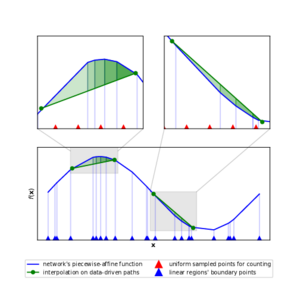

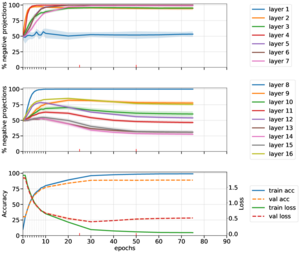

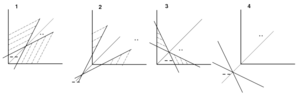

I am a doctoral student in Machine Learning and Computer Vision, working under the supervision of Prof. Mårten Björkman and Prof. Hossein Azizpour at the division of Robotics, Perception and Learning (RPL). My research investigates the factors behind implicit regularization in deep discriminative learning, as imposed by network architecture, optimization, and natural image data. My work so far has focused on piecewise affine functions, which represent the hypothesis space of ReLU networks, and on their complexity in relation to the double descent curve of the test error.

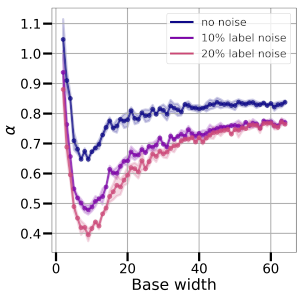

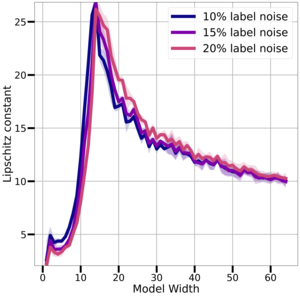

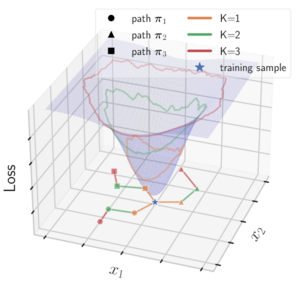

My most recent works study the generalization of deep networks in relation to the interpolation of noisy training data, and relate the complexity of a model's learned function to the distance of its parameters from initialization.

News

- [2023-11-07] Thank you, Heavy Tails workshop organizers at NeurIPS for awarding me a free conference registration!

- [2023-10-23] Our paper "On the Varied Faces of Overparameterization in Supervised and Self-Supervised Learning" was accepted at the Heavy Tails workshop at NeurIPS!

- [2023-08-25] Our paper "On the Lipschitz Constant of Deep Networks and Double Descent" was accepted as an oral presentation at BMVC!

- [2023-03-13] Our paper "Deep Double Descent via Smooth Interpolation" was accepted to TMLR!

- [2023-02-23] I have been nominated as a top reviewer for AISTATS 2023.

GPG Key

Use this key to send me encrypted emails. Please note that the subject line is not encrypted. My key has fingerprint E020 8301 321C 8604 D029 7165 3069 2CE0 82E5 D321.

Publications

- Gamba, M., Ghosh, A., Agrawal, K., Richards, B., Azizpour, H., Björkman, M. "On the Varied Faces of Overparameterization in Supervised and Self-Supervised Learning". NeurIPS workshop Heavy Tails, 2023.

- Gamba, M., Azizpour, H., Björkman, M. "On the Lipschitz Constant of Deep Networks and Double Descent". British Machine Vision Conference (BMVC), 2023.

- Gamba, M., Englesson, E., Björkman, M., Azizpour, H. "Deep Double Descent via Smooth Interpolation". Transactions on Machine Learning Research, 2023.

- Gamba, M., Azizpour, H., Björkman, M. "Overparameterization Implicitly Regularizes Input-Space Smoothness". NeurIPS workshop INTERPOLATE, 2022.

- Gamba, M., Chmielewski-Anders, A., Sullivan, J., Azizpour, H., and Björkman, M. "Are All Linear Regions Created Equal?". In International Conference on Artificial Intelligence and Statistics (pp. 6573-6590). PMLR, 2022.

- Gamba, M., Carlsson, S., Azizpour, H., and Björkman, M. "Hyperplane Arrangements of Trained ConvNets Are Biased". arXiv preprint arXiv:2003.07797, 2020.

- Gamba, M., Azizpour, H., Carlsson, S., and Björkman, M. "On the Geometry of Rectifier Convolutional Neural Networks". In Proceedings of the IEEE International Conference on Computer Vision Workshops, 2019.

Teaching

I have served as a Teaching Assistant for the following Master-level courses:

- Deep Learning, Advanced Course (2019-present)

- Deep Learning in Data Science (2019-present)

- Artificial Neural Networks and Deep Architectures (2017-2019)

- Computer Security (2016-2019)

I was in charge of the Machine Learning reading group from 2019 to 2022, together with a fellow student.

System Administration

Together with two fellow doctoral students, since 2019 I have been administering a SLURM-based GPU cluster with a hundred GPUs, providing compute to the RPL division.