AI created more than 100,000 pieces of music after analyzing Irish and English folk tunes

By turns lively and yearning, the traditional folk musics of Ireland and Britain have made their mark around the world. Now this perennially popular music is helping computers learn to become a new kind of partner in music creation.

A machine learning system overseen by a researcher at KTH Royal Institute of Technology has produced 100,000 new folk tunes to date, drawing a diverse range of reactions from folk musicians and the public. Some of the music can even be heard on a newly released album by an Irish folk group.

Bob Sturm, associate professor of computer science in the Department of Speech, Music and Hearing, says that the main idea of the project was to train computer models on folk music, so that they appear to have some musical intelligence, and then to “devise methods to unravel what they are actually doing.”

The research subsequently led to creative opportunities.

“Our work with many collaborators, such as composer Oded Ben-Tal at Kingston University in the UK, and professional musicians, has also shown how the models can serve a wider purpose: as useful partners in creating new music,” Sturm says.

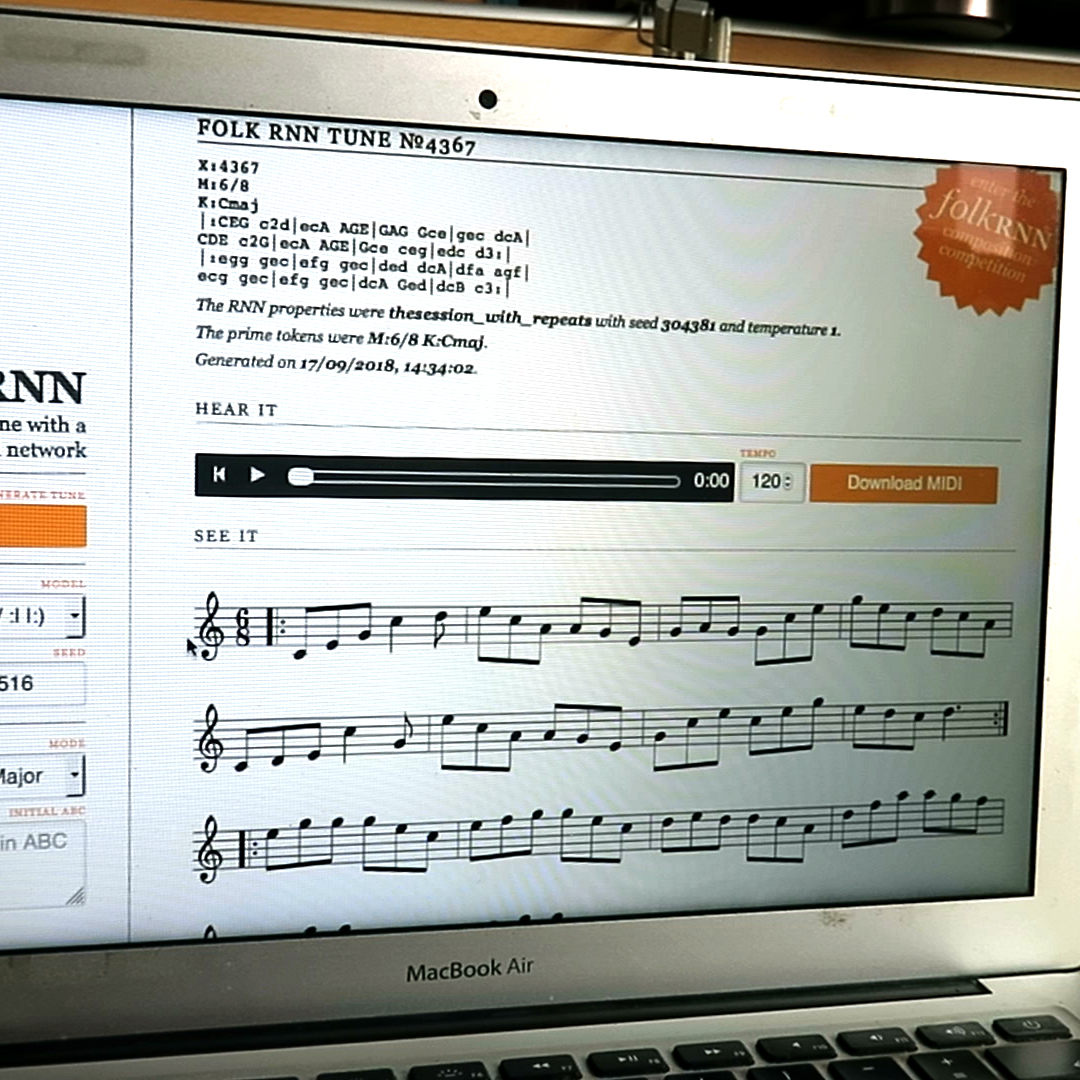

The project uses an off-the-shelf artificial intelligence method called a recurrent neural network (RNN), which essentially predicts what comes next based on what it has previously seen. For training data, the team drew upon the website thesession.org, which contains tens of thousands of tunes transcribed by people using a shorthand language designed for folk music.
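To make the idea of "predicting what comes next" concrete, here is a minimal sketch of a single recurrent step and a sampling loop, written in plain Python. It is not the project's code: the token list `VOCAB`, the hidden size, and the function names `step` and `generate` are all hypothetical, and the weights are random rather than trained, so the output is musically meaningless. It only shows how a hidden state carries what the network has seen so far into each new prediction.

```python
import math
import random

# Hypothetical toy vocabulary of note-like tokens (not the project's real token set).
VOCAB = ["A", "B", "c", "d", "e", "f", "|"]
HIDDEN = 8  # assumed hidden-state size, chosen only for illustration

rng = random.Random(0)

def rand_matrix(rows, cols):
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

# Untrained, randomly initialised weights; a real model would learn these from data.
W_xh = rand_matrix(HIDDEN, len(VOCAB))  # current token -> hidden state
W_hh = rand_matrix(HIDDEN, HIDDEN)      # previous hidden state -> hidden state
W_hy = rand_matrix(len(VOCAB), HIDDEN)  # hidden state -> next-token scores

def step(token, h):
    """Consume one token; return (next-token probabilities, new hidden state)."""
    x = VOCAB.index(token)
    # The new state mixes the current token with the previous state (the recurrence).
    h_new = [
        math.tanh(W_xh[i][x] + sum(W_hh[i][j] * h[j] for j in range(HIDDEN)))
        for i in range(HIDDEN)
    ]
    scores = [
        sum(W_hy[k][i] * h_new[i] for i in range(HIDDEN))
        for k in range(len(VOCAB))
    ]
    # Softmax turns the scores into a probability distribution over VOCAB.
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps], h_new

def generate(seed, length):
    """Feed a non-empty seed, then sample `length` further tokens one at a time."""
    h = [0.0] * HIDDEN
    probs = None
    for tok in seed:
        probs, h = step(tok, h)
    out = list(seed)
    for _ in range(length):
        tok = rng.choices(VOCAB, weights=probs)[0]
        out.append(tok)
        probs, h = step(tok, h)
    return out

tune = generate(["A", "B", "c"], 8)
print(" ".join(tune))
```

Training would adjust the three weight matrices so that the predicted probabilities match the symbol sequences found in the transcribed tunes; that step is omitted here.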

“The resulting computer models show some ability to repeat and vary patterns in ways that are characteristic of this kind of music,” Sturm says. “It was not programmed to do this using rules – it learned to do so because these patterns exist in the data we fed it.”

To test the plausibility of the generated tunes, Sturm and Ben-Tal challenged a group of professional Irish traditional musicians to create an album of folk music drawing upon existing tunes and the 100,000 generated by their computer models. The result is a full-length album on which more than half of the music is computer-generated. Sturm and Ben-Tal then released the album online in order to solicit reviews and comments from professionals and the public. “We had to make up a story about the album’s origins in order to avoid the bias that can result when someone believes a creative product was created by a computer,” Sturm says. “And now that we have reviews, we are revealing the true origins of the album.”

These computer models aren’t about to elbow human composers aside any time soon, Sturm says. “Music is and always will be a human activity. Our models are merely generating sequences of symbols that require trained musicians to transform into music, or even to decide not to waste the time.

“But strange, unexpected, and sometimes wonderful things occur when models venture outside their limited knowledge,” he says. “If we push a model just a bit away from patterns it has seen, it can fail catastrophically. Unlike a human, the system isn’t able to generalize beyond a very specific context.” It turned out that the system had not learned much of what the researchers originally thought it had about key features of folk music, such as variation and repetition.

The models’ idiosyncratic knowledge of music notwithstanding, Sturm and his colleagues are pursuing the question of whether they can augment music creation. They created an online implementation at folkrnn.org, where anyone can explore the models for themselves. They also created an online project called The Machine Folk Session, a growing collection of machine-generated folk music, much as thesession.org serves as a collection of real music.

“It will be interesting to see if this collection is used to train future generations of computer models,” Sturm says.

David Callahan