School of Electrical Engineering and Computer Science

The School of Electrical Engineering and Computer Science (EECS) is one of five schools at KTH Royal Institute of Technology. We conduct internationally prominent research and education in areas such as electrical engineering, computer science, intelligent systems and human-centered technology. Here you can read about our research, education and the latest news at EECS. The school is located at KTH Campus in Stockholm.

Latest news from EECS

AI is changing journalism – KTH contributes with contract education

AI is already affecting how news is being produced, disseminated and consumed – often through technology and platforms that journalists themselves have no control over. At a time when algorithms contr...

KTH researchers awarded IEEE fellow

Lina Bertling Tjernberg, Professor in Electrical Power Systems, and Martin Monperrus, Professor in Software Engineering, have been elevated to IEEE Fellow, Class of 2026. Both are with KTH and School at ...

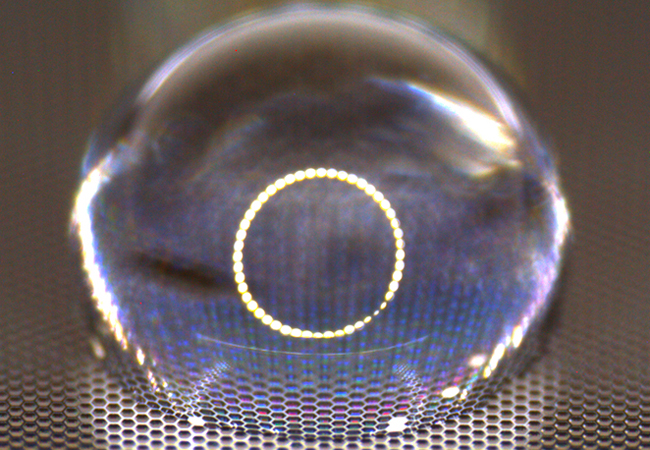

What is the rare interstellar object made of? New observations give clues

A mysterious visitor from outside our solar system has once again captured global attention — and researchers from KTH Royal Institute of Technology played a key role in uncovering what it really is.

More news

- AI is changing journalism – KTH contributes with contract education (27 Jan 2026)
- KTH researchers awarded IEEE fellow (16 Dec 2025)
- What is the rare interstellar object made of? New observations give clues (13 Nov 2025)
- Prestigious IEEE award to EECS associate professor (22 Oct 2025)
- Taking Humour Seriously in Graduate Training (24 Sep 2025)

Calendar

Public defences of doctoral theses

Monday 2026-03-16, 09:00

Location: F3 (Flodis), Lindstedtsvägen 26 & 28, Stockholm

Video link: https://kth-se.zoom.us/w/63788305553

Doctoral student: Alfredo Reichlin, Robotics, Perception and Learning

Title: Interactive Representation Learning

Seminars and lectures

Monday 2026-03-16, 15:00 - 16:00

Location: Sten Velander, Teknikringen 33

Video link: https://kth-se.zoom.us/j/61800896727

Title: Innovation practice at KTH - how to turn ideas into reality and make real world impact

Licentiate seminars

Tuesday 2026-03-17, 10:00

Location: Lindstedtsvägen 5, Room D37

Video link: https://kth-se.zoom.us/j/65756749078

Doctoral student: Leon Fernandez, Network and Systems Engineering, CDIS

Title: Black-Box Fuzz Testing for Security in Service-Provider Networks